Ignis AI: De-Risking a Pre-Revenue AI Product

Context

Ignis was building an AI-powered behavioral assessment platform to help companies improve hiring decisions. Its proprietary PowerSkills assessments were designed to measure the soft skills that matter in any workplace.

My Role

- Founding Senior Director of Product Design at a 0→1 AI hiring startup.

- Built and led a 4-person design + research team (2 senior designers, 1 contractor, 1 UXR).

- Initiated and oversaw a 55-participant mixed-method research program (15 interviews, 16 concept tests, 49 survey responses).

- Iterated on and finalized the recruiter results product in 4 weeks based on live testing.

- Provided the data and insight to kill multiple planned product lines (L&D + internal mobility) that showed weak demand signal.

Where we started

- Pre-product

- Pre-revenue

- Under runway pressure

- Engineering-heavy initially (infra build first)

The Challenge

Validate real business demand for, and trust in, AI-assisted hiring tools, especially our in-development PowerSkills assessment. We wanted to make sure we didn’t build an impressive product that nobody adopted.

Core Strategic Question

How do you create adoption for an AI-driven hiring assessment in a market that distrusts AI and has a slow validation loop to determine if a hire was successful?

Three explicit business risks emerged

- We might not be solving a critical pain.

- We might build the wrong MVP.

- Recruiters might not trust AI-generated applicant scoring.

My role was to architect a validation system that would systematically de-risk all three, and to lead my team in executing it.

Phase I: Validating Real Pain (8 Interviews)

Participants

Director/VP-level HR and TA leaders across tech, finance, retail, healthcare.

What We Learned

1. Time + Volume Overwhelm

Recruiters were buried in applications — especially with the rise of AI-generated resumes.

2. Trust & Bias Concerns

AI was seen as promising but risky:

- “How is this score calculated?”

- “Can I override it?”

- “Is it biased?”

- “Will it work internationally?”

3. Values Alignment > Technical Skill

Across interviews, hiring leaders prioritized:

- Long-term viability

- Company values alignment

- Behavioral traits

It’s not that technical skills weren’t important; they simply felt easier and more efficient to assess. Determining soft-skills fit was often challenging and time-consuming.

Phase II: Quantifying Opportunity Gaps (49 Survey Responses)

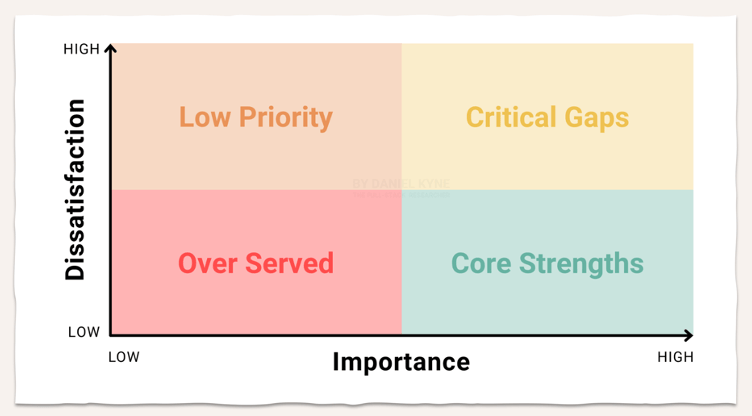

To avoid building for anecdotes, we ran an opportunity gap survey and mapped the results on two dimensions.

For each pain point:

- Importance (1–5)

- Satisfaction with current solution (1–5)

High importance + High dissatisfaction = MVP priority.
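
The prioritization rule above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis we actually ran: the function name `rank_opportunity_gaps`, the use of "mean importance minus mean satisfaction" as the gap, and the sample ratings are all assumptions made for the example.

```python
from collections import defaultdict
from statistics import mean


def rank_opportunity_gaps(responses):
    """Rank pain points by mean importance minus mean satisfaction.

    responses: iterable of (pain_point, importance, satisfaction) tuples,
    with both ratings on the survey's 1-5 scale.
    Returns rows of (pain_point, avg_importance, avg_satisfaction, gap),
    largest gap first.
    """
    ratings = defaultdict(lambda: ([], []))
    for pain_point, importance, satisfaction in responses:
        ratings[pain_point][0].append(importance)
        ratings[pain_point][1].append(satisfaction)

    ranked = []
    for pain_point, (imp, sat) in ratings.items():
        avg_imp, avg_sat = mean(imp), mean(sat)
        ranked.append((pain_point, avg_imp, avg_sat, avg_imp - avg_sat))
    return sorted(ranked, key=lambda row: row[3], reverse=True)


# Made-up ratings for illustration only (not the survey data).
sample = [
    ("pain point A", 5, 2),
    ("pain point A", 4, 1),
    ("pain point B", 3, 4),
    ("pain point B", 2, 3),
]
ranking = rank_opportunity_gaps(sample)
```

In this toy data, "pain point A" is rated important and poorly served, so it ranks first; "pain point B" has a negative gap and would be a candidate for deprioritization.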

Outcome

- Hiring workflow pain validated as high urgency.

- L&D and internal mobility did not surface as burning needs.

- Entire product lines were deprioritized.

This significantly narrowed MVP scope and reduced distraction.

Trust as the Central Adoption Constraint

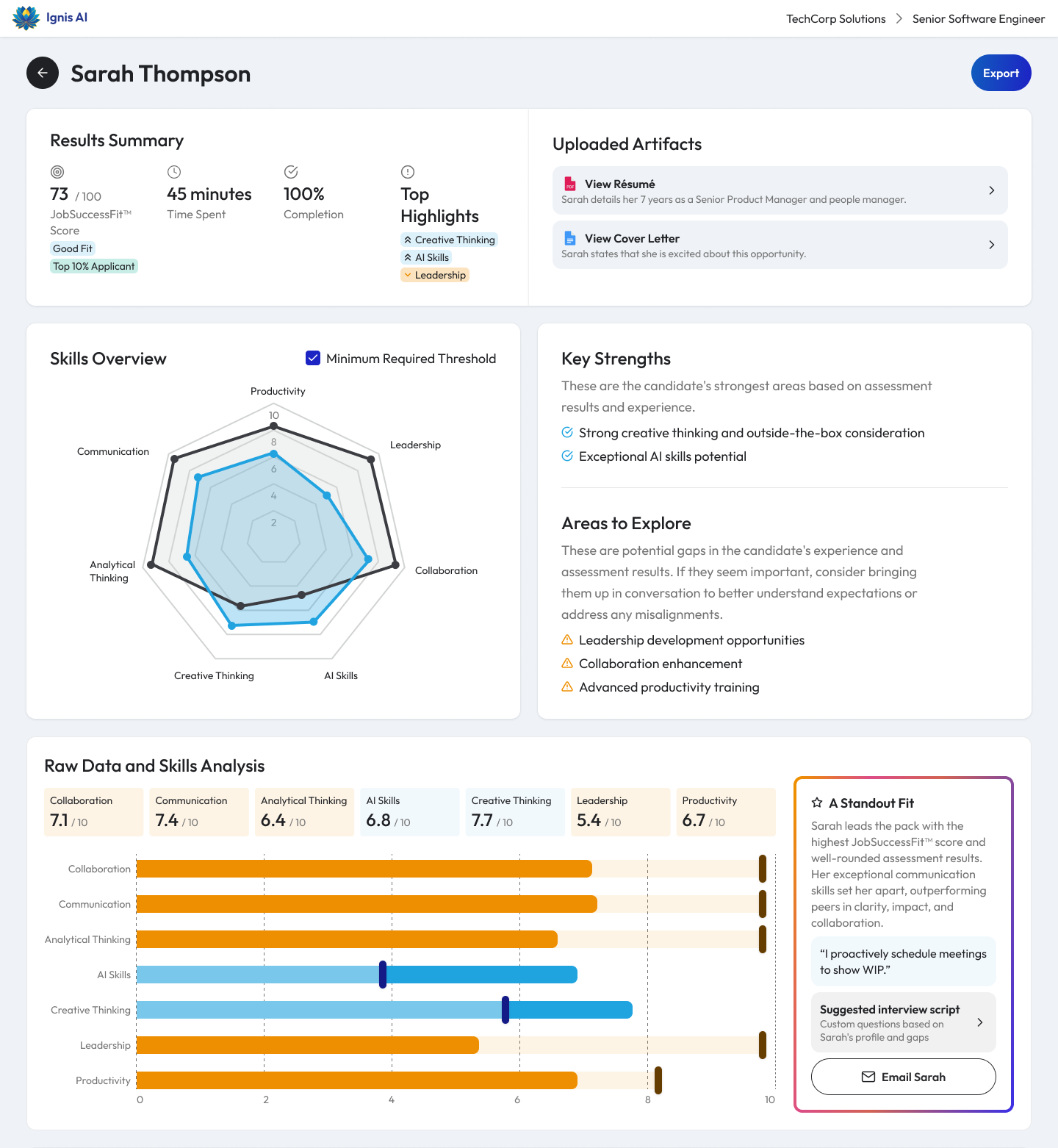

The most important insight: recruiters were open to AI, but only as decision support, not as a decision maker. Trust would need to be built over time. So we ran structured concept testing to understand whether our applicant scoring system gave recruiters actionable results.

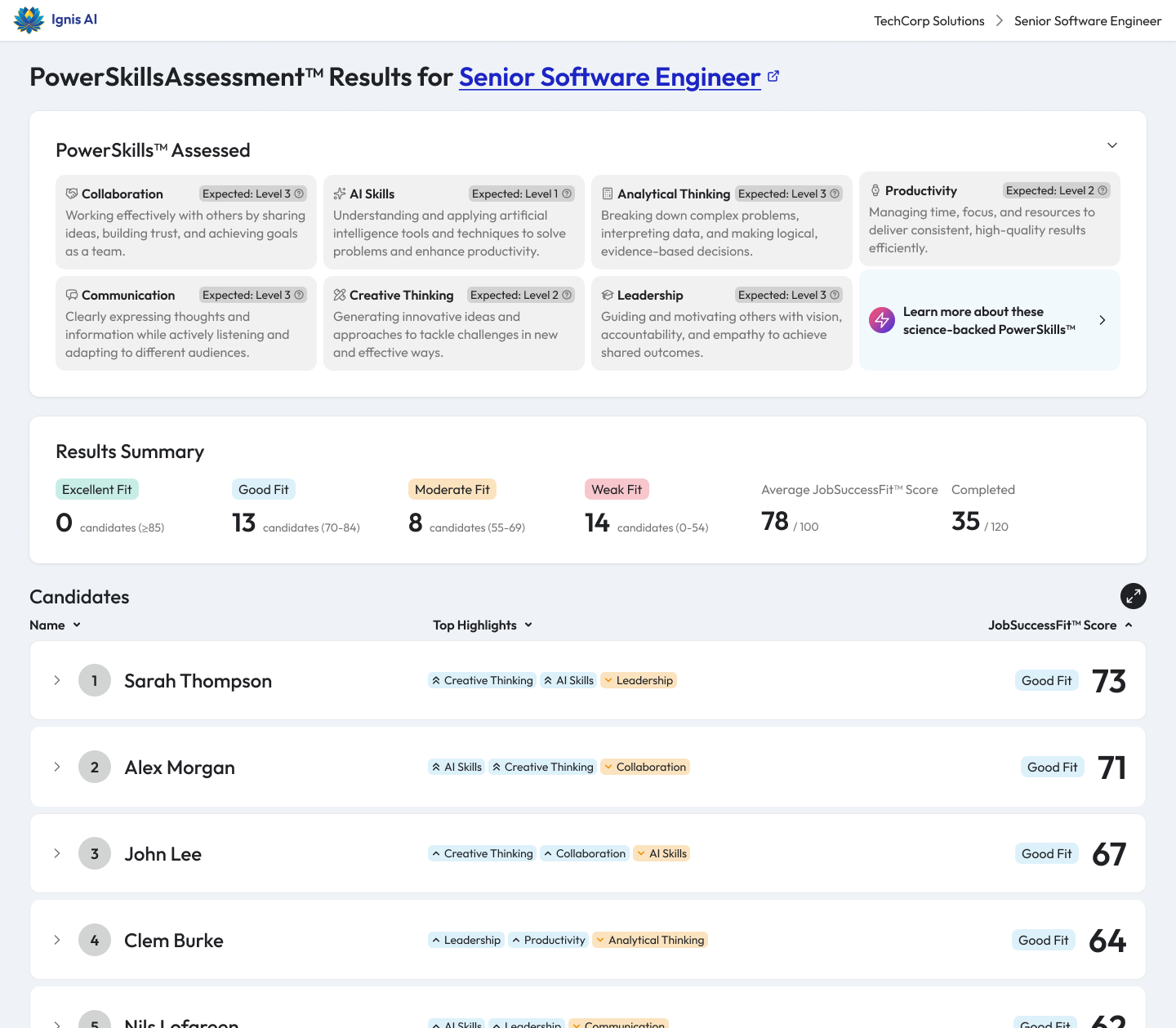

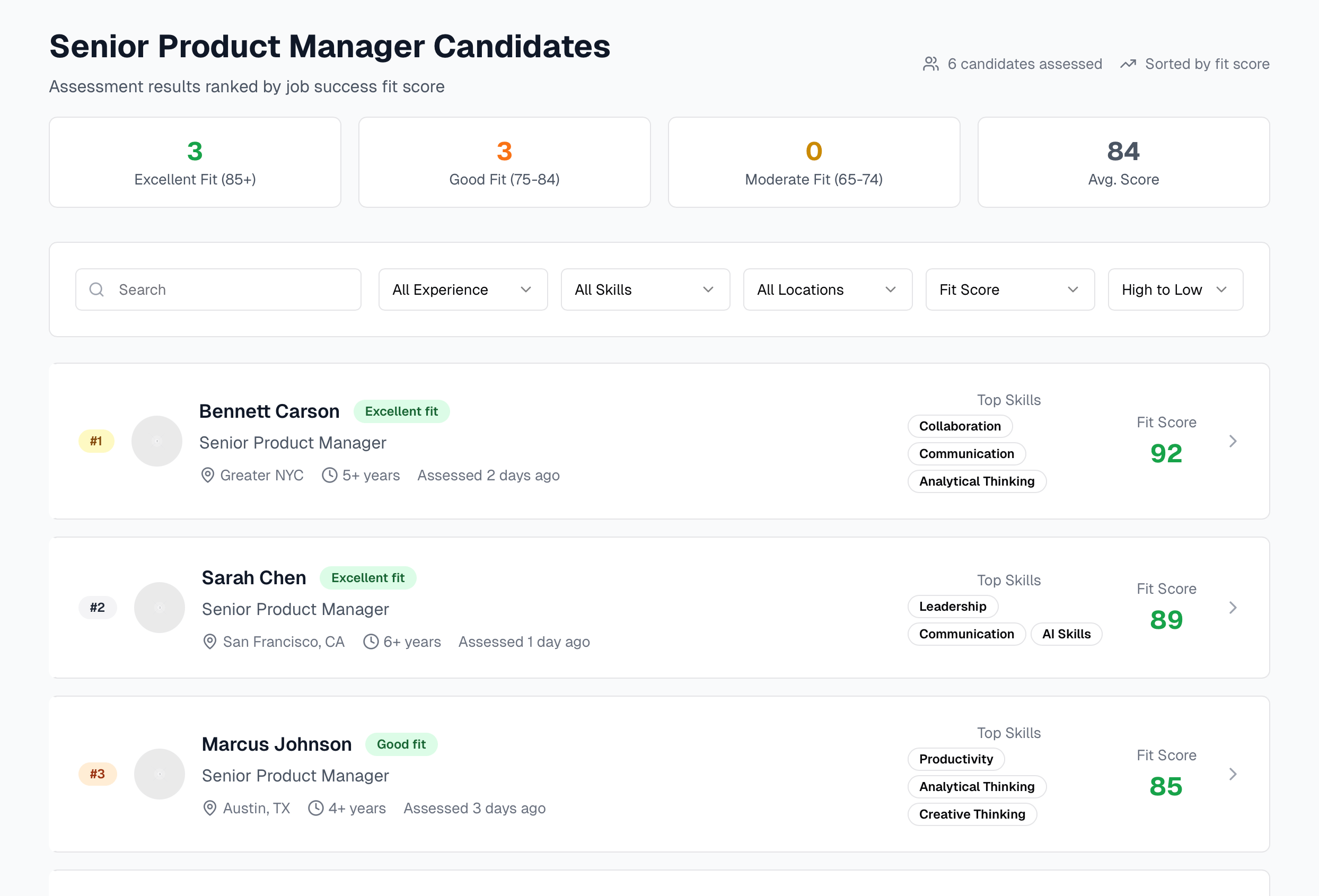

Recruiter Results View Concept Testing (16 Sessions)

Research Goals

- Can recruiters interpret the JobSuccessFit score?

- Does it improve decision speed?

- Does it feel trustworthy?

What We Found

- Strong interest in structured behavioral data.

- Immediate skepticism toward opaque scoring.

- 0–3 scale confused users (“Is this like 2/3 correct?”).

- “Move to next stage” CTA triggered risk anxiety.

- Recruiters demanded scoring transparency and weighting flexibility.

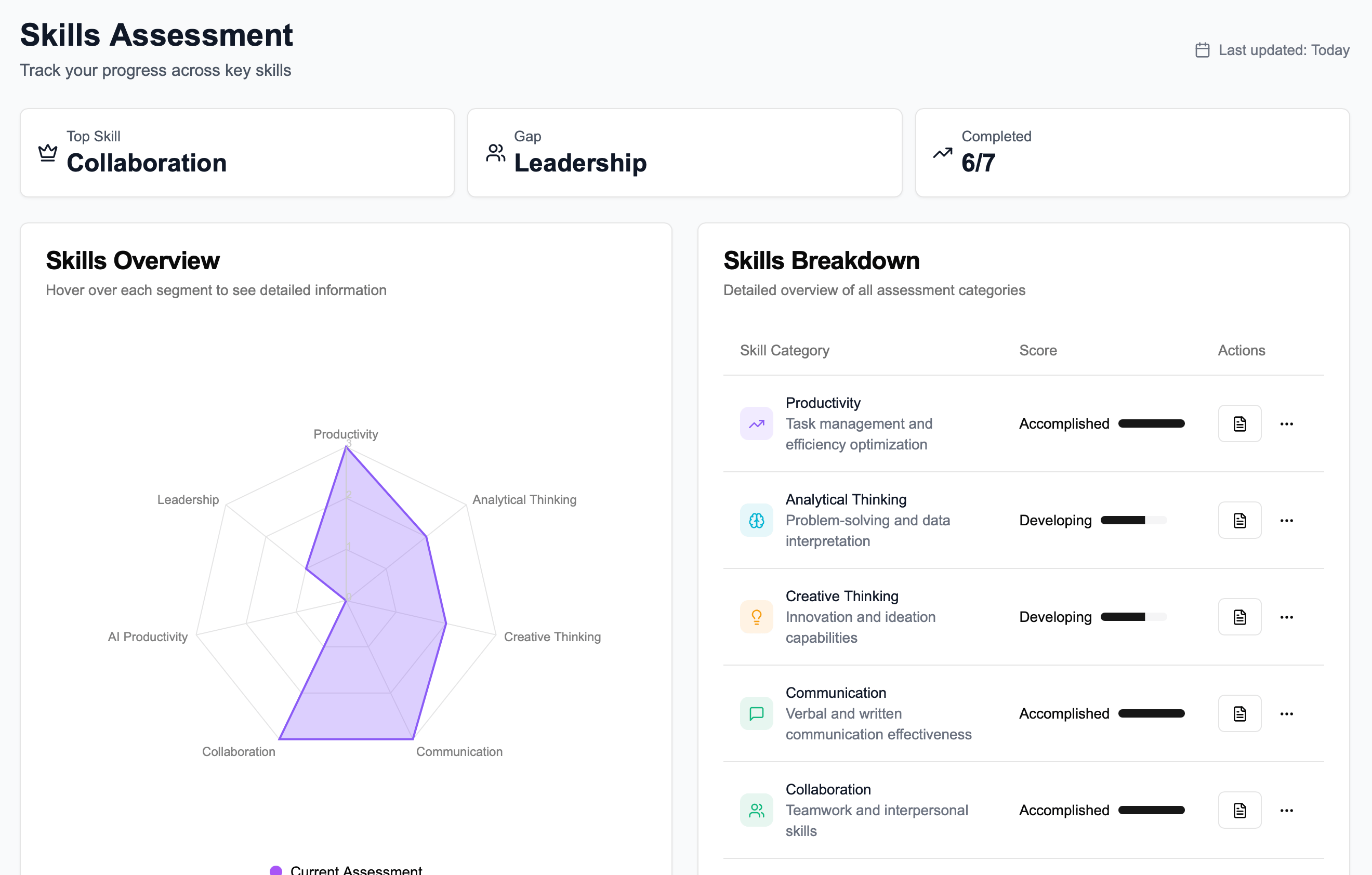

Product Changes Driven by Research

- Switched scoring from 0–3 to 0–100.

- Elevated Skills Analysis higher in hierarchy.

- Added clearer score breakdown explanations.

- Reworded high-risk CTAs to reduce perceived irreversibility.

- Reframed “culture fit” language to avoid exclusionary framing.

- Explored adjustable weighting by role.

- Improved comparison tooling for multi-candidate review.

These changes aligned the product with recruiters’ mental models rather than academic assessment logic.
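
To make the transparency and weighting ideas concrete, here is a minimal sketch of a 0–100 score that ships with its own breakdown. It is an illustration only, not Ignis's scoring method: the trait names, the weights, the linear rescaling from a 0–3 raw scale, and the function `score_breakdown` are all hypothetical.

```python
def score_breakdown(trait_scores, weights, raw_max=3):
    """Return (overall 0-100 score, per-trait breakdown).

    trait_scores: raw score per trait on a 0..raw_max scale.
    weights: relative weight per trait (adjustable by role).
    """
    total_weight = sum(weights[trait] for trait in trait_scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")

    breakdown = {}
    for trait, raw in trait_scores.items():
        scaled = 100 * raw / raw_max             # rescale 0..raw_max to 0..100
        share = weights[trait] / total_weight    # normalized weight for this trait
        breakdown[trait] = {
            "score": round(scaled),
            "weight": share,
            "points": scaled * share,            # contribution to the overall score
        }
    overall = round(sum(item["points"] for item in breakdown.values()))
    return overall, breakdown


# Hypothetical traits and role weights, for illustration only.
traits = {"collaboration": 3, "adaptability": 2, "communication": 1.5}
role_weights = {"collaboration": 2, "adaptability": 1, "communication": 1}
overall, breakdown = score_breakdown(traits, role_weights)
```

Returning the per-trait contributions alongside the overall number is what lets a results view answer "How is this score calculated?" directly, and changing `role_weights` shows how the same candidate would score for a different role.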

Design Efficiency

To increase iteration and research velocity under time and runway pressure, I:

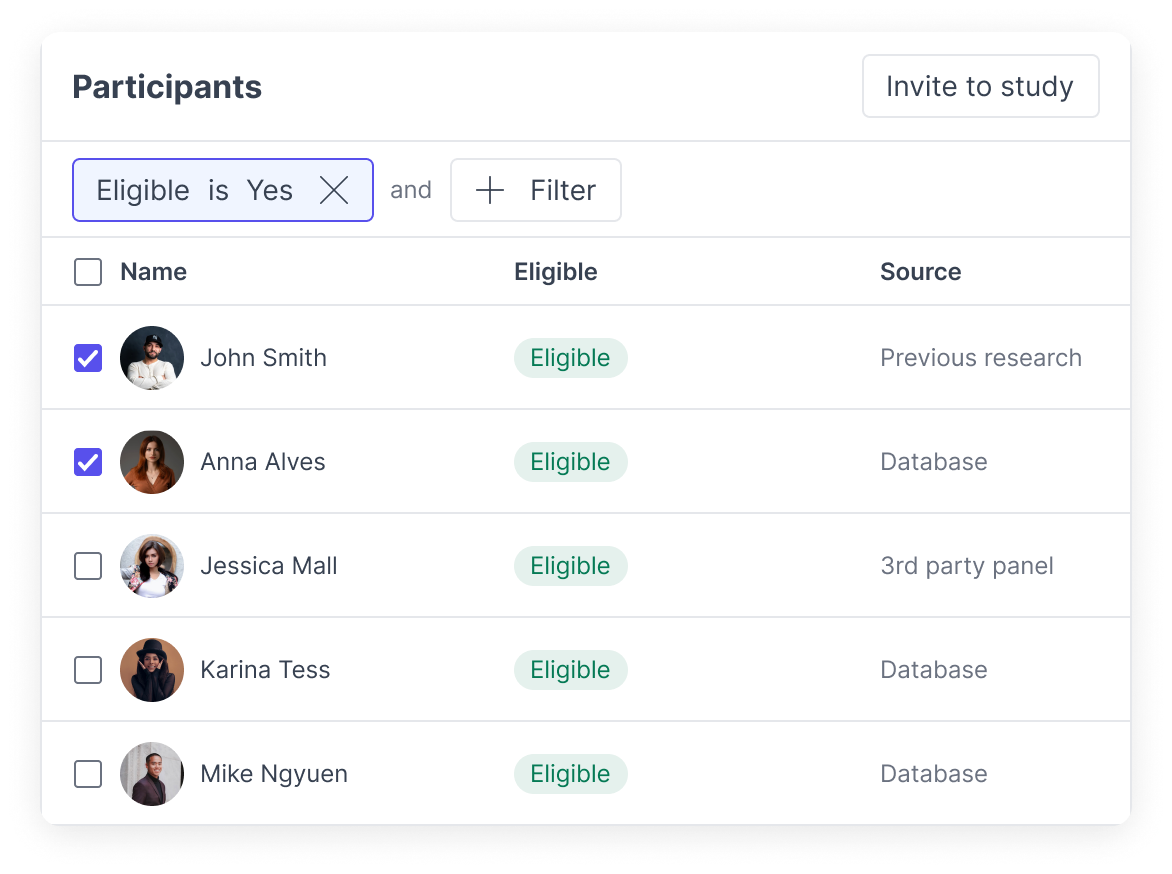

Built a Pre-Recruiting Pipeline

Instead of recruiting from scratch for each study, we:

- Recruited ahead of research.

- Built warm participant relationships.

- Maintained an active HR panel.

This removed typical 1–2 week recruiting delays per study and let us start building the relationships that ultimately became design partners.

Compressed Prototype Cycles

- Used AI-assisted prototyping workflows, primarily with V0.dev.

- Delivered externally testable prototypes in under one week.

Established Cross-Functional Cadence

- Regular executive readouts.

- Tight coordination with PM + Engineering.

- Prevented discovery from becoming a bottleneck.

Adoption Signal

- Secured 5 pilot design partners (10–200 employees).

- Verbal commitments to pilot.

- Established early case-study pipeline (pending pricing strategy).

While long-term hiring outcome data required 3+ months, early signal indicated strong interest when transparency and workflow fit were addressed.

What I’d Do Differently

If starting over / as CEO

- Test earlier in real-world scenarios with low-tech solutions (e.g., deliver assessments via Google Forms, score them manually, and present results to clients in a slide deck). I believe this would have sharpened our focus sooner and let us learn and build more efficiently. There’s no substitute for real-world scenarios.

- Run backtesting pilots where we assess current employees and correlate those assessment results with manager performance reviews, to help establish credibility and show specific organizations how we can help them discover candidates who are similar to their best employees.
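
The backtesting idea could start as simply as the sketch below, which computes a Pearson correlation between assessment scores and manager ratings for the same employees. The data is made up for illustration, the function name `pearson_r` is my own, and Pearson is only one reasonable choice; a rank-based measure may suit ordinal review ratings better.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric samples."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples of at least 2 values")
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one sample is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical backtest data: one entry per current employee.
assessment_scores = [62, 71, 55, 88, 93, 47]   # 0-100 assessment results
manager_ratings = [3, 4, 2, 4, 5, 2]           # 1-5 performance review ratings
r = pearson_r(assessment_scores, manager_ratings)
```

With only a handful of employees, a high `r` is suggestive rather than conclusive, so a real pilot would need a larger sample before the result could be used to establish credibility with a client.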